Chapter 3

Information Is a Fundamental Entity


3.1 Information: A Fundamental Quantity

The trail-blazing discoveries about the nature of energy in the 19th century caused the first technological revolution, when manual labor was replaced on a large scale by technological appliances—machines which could convert energy. In the same way, knowledge concerning the nature of information in our time initiated the second technological revolution where mental “labor” is saved through the use of technological appliances—namely, data processing machines. The concept “information” is not only of prime importance for informatics theories and communication techniques, but it is a fundamental quantity in such wide-ranging sciences as cybernetics, linguistics, biology, history, and theology. Many scientists, therefore, justly regard information as the third fundamental entity alongside matter and energy.

Claude E. Shannon was the first researcher who tried to define information mathematically. The theory based on his findings had the advantages that different methods of communication could be compared and that their performance could be evaluated. In addition, the introduction of the bit as a unit of information made it possible to describe the storage requirements of information quantitatively. The main disadvantage of Shannon’s definition of information is that the actual contents and impact of messages were not investigated. Shannon’s theory of information, which describes information from a statistical viewpoint only, is discussed fully in the appendix (chapter A1).
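The statistical character of Shannon's measure can be illustrated with a short calculation. The following is a minimal sketch, not part of the original text: it estimates the information per symbol from single-character frequencies with Shannon's formula H = -Σ p·log2(p), then multiplies by the message length to get a rough storage figure in bits. The function names and the sample sentence are assumptions chosen for illustration, and a simple frequency count like this ignores any dependence between neighboring symbols.

```python
import math
import random
from collections import Counter


def entropy_bits_per_symbol(message: str) -> float:
    """Shannon entropy H = -sum(p * log2(p)) over single-symbol frequencies."""
    counts = Counter(message)
    total = len(message)
    # Sort the counts so the floating-point sum does not depend on symbol order.
    return -sum((n / total) * math.log2(n / total) for n in sorted(counts.values()))


def statistical_information_bits(message: str) -> float:
    """Total statistical information: number of symbols times bits per symbol."""
    return len(message) * entropy_bits_per_symbol(message)


# Hypothetical sample message, and the same characters shuffled into nonsense.
meaningful = "in the beginning was information"
letters = list(meaningful)
random.Random(0).shuffle(letters)  # fixed seed so the shuffle is repeatable
scrambled = "".join(letters)

print(entropy_bits_per_symbol("abab"))  # two equally frequent symbols
print(statistical_information_bits(meaningful))
print(statistical_information_bits(scrambled))
```

Because the shuffled string contains exactly the same characters with the same frequencies, both calls to `statistical_information_bits` return the same value, although only one of the strings carries any meaning. This is the limitation described above: the measure counts symbols and their probabilities, not contents.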

The true nature of information will be discussed in detail in the following chapters, and statements will be made about information and the laws of nature. After a thorough analysis of the information concept, it will be shown that the fundamental theorems can be applied to all technological and biological systems and also to all communication systems, including such diverse forms as the gyrations of bees and the message of the Bible. There is only one prerequisite—namely, that the information must be in coded form.

Since the concept of information is so complex that it cannot be defined in one statement (see Figure 12), we will proceed as follows: We will formulate various special theorems which will gradually reveal more about the “nature” of information, until we eventually arrive at a precise definition (compare chapter 5). Any repetitions found in the contents of some theorems (redundancy) are intentional, and the possibility of having various different formulations, according to theorem N8 (paragraph 2.3), is also employed.

3.2 Information: A Material or a Mental Quantity

We have indicated that Shannon’s definition of information encompasses only a very minor aspect of information. Several authors have repeatedly pointed out this defect, as the following quotations show:

Karl Steinbuch, a German information scientist [S11]: “The classical theory of information can be compared to the statement that one kilogram of gold has the same value as one kilogram of sand.”

Warren Weaver, an American information scientist [S7]: “Two messages, one of which is heavily loaded with meaning and the other which is pure nonsense, can be exactly equivalent . . . as regards information.”

Ernst von Weizsäcker [W3]: “The reason for the ‘uselessness’ of Shannon’s theory in the different sciences is frankly that no science can limit itself to its syntactic level.”¹

The essential aspect of each and every piece of information is its mental content, and not the number of letters used. If one disregards the contents, then Jean Cocteau’s facetious remark is relevant: “The greatest literary work of art is basically nothing but a scrambled alphabet.”

At this stage we want to point out a fundamental fallacy that has already caused many misunderstandings and has led to seriously erroneous conclusions, namely the assumption that information is a material phenomenon. The philosophy of materialism is fundamentally predisposed to relegate information to the material domain, as is apparent from philosophical articles emanating from the former DDR (East Germany) [S8 for example]. Even so, the former East German scientist J. Peil [P2] writes: “Even the biology based on a materialistic philosophy, which discarded all vitalistic and metaphysical components, did not readily accept the reduction of biology to physics. . . . Information is neither a physical nor a chemical principle like energy and matter, even though the latter are required as carriers.”

Also, according to a frequently quoted statement by the American mathematician Norbert Wiener (1894–1964), information cannot be a physical entity [W5]: “Information is information, neither matter nor energy. Any materialism which disregards this, will not survive one day.”

Werner Strombach, a German information scientist of Dortmund [S12], emphasizes the nonmaterial nature of information by defining it as an “enfolding of order at the level of contemplative cognition.”

The German biologist G. Osche [O3] sketches the unsuitability of Shannon’s theory from a biological viewpoint, and also emphasizes the nonmaterial nature of information: “While matter and energy are the concerns of physics, the description of biological phenomena typically involves information in a functional capacity. In cybernetics, the general information concept quantitatively expresses the information content of a given set of symbols by employing the probability distribution of all possible permutations of the symbols. But the information content of biological systems (genetic information) is concerned with its ‘value’ and its ‘functional meaning,’ and thus with the semantic aspect of information, with its quality.”

Hans-Joachim Flechtner, a German cyberneticist, referred to the fact that information is of a mental nature, both because of its contents and because of the encoding process. This aspect is, however, frequently underrated [F3]: “When a message is composed, it involves the coding of its mental content, but the message itself is not concerned about whether the contents are important or unimportant, valuable, useful, or meaningless. Only the recipient can evaluate the message after decoding it.”

3.3 Information: Not a Property of Matter!

It should now be clear that information, being a fundamental entity, cannot be a property of matter, and its origin cannot be explained in terms of material processes. We therefore formulate the following fundamental theorem:

Theorem 1: The fundamental quantity information is a non-material (mental) entity. It is not a property of matter, so that purely material processes are fundamentally precluded as sources of information.

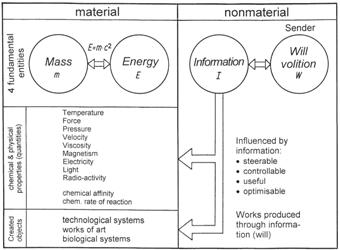

Figure 8 illustrates the known fundamental entities: mass, energy, and information. Mass and energy are undoubtedly of a material-physical nature, and for both of them important conservation laws play a significant role in physics and chemistry and in all derived applied sciences. Mass and energy are linked by means of Einstein’s equivalence formula, $E = mc^2$. In the left part of Figure 8, some of the many chemical and physical properties of matter in all its forms are illustrated, together with the defined units. The right-hand part of Figure 8 illustrates nonmaterial properties and quantities, where information, I, belongs.

What is the causative factor for the existence of information? What prompts us to write a letter, a postcard, a note of felicitation, a diary, or a comment in a file? The most important prerequisite is our own volition, or that of a supervisor. In analogy to the material side, we now introduce a fourth fundamental entity, namely “will” (volition), W. Information and volition are closely linked, but this relationship cannot be expressed in a formula because both are of a nonmaterial (mental, intellectual, spiritual) nature. The connecting arrows indicate the following: Information is always based on the will of a sender who issues the information. It is a variable quantity depending on intentional conditions. Will itself is also not constant, but can in its turn be influenced by the information received from another sender. Conclusion:

Theorem 2: Information only arises through an intentional, volitional act.

Figure 8: The four fundamental entities are mass and energy (material) and information and will (nonmaterial). Mass and energy comprise the fundamental quantities of the physical world; they are linked through the well-known Einstein equation, $E = mc^2$. On the nonmaterial side we also have two fundamental entities, namely information and volition, which are closely linked. Information can be stored in physical media and used to steer, control, and optimize material processes. All created systems originate through information. A creative source of information is always linked to the volitional intent of a person; this fact demonstrates the nonmaterial nature of information.

It is clear from Figure 8 that the nonmaterial entity information can influence the material quantities. Electrical, mechanical, or chemical quantities can be steered, controlled, utilized, or optimized by means of intentional information. The strategy for achieving such control is always based on information, whether it is a cybernetic manufacturing technique, instructions for building an economical car, or the utilization of electricity for driving a machine. In the first place, there must be the intention to solve a problem, followed by a conceptual construct for which the information may be coded in the form of a program, a technical drawing, or a description, etc. The next step is then to implement the concept. All technological systems as well as all constructed objects, from pins to works of art, have been produced by means of information. None of these artifacts came into existence through some form of self-organization of matter, but all of them were preceded by establishing the required information. We can now conclude that information was present in the beginning, as the title of this book states.

Theorem 3: Information comprises the nonmaterial foundation for all technological systems and for all works of art.

What is the position in regard to biological systems? Does theorem 3 also hold for such systems, or is there some restriction? If we could successfully formulate the theorems in such a way that they are valid as laws of nature, then they would be universally valid according to the essential characteristics of the laws of nature, N2, N3, and N4.

In the Beginning Was Information


Footnotes

1. Many authors erroneously elevate Shannon’s information theory to the syntactic level. This is, however, not justified in the light of appendix A1, since it comprises only the statistical aspects of a message without regard to syntactic rules.


- © 2026 Answers in Genesis